Introduction

A key part of deploying applications to the cloud with a DevOps mindset is keeping up to date with the latest patches and changes that apply to your environment. This can mean using managed services from AWS and others that take care of some of the underlying updates for you, or that provide maintenance windows for applying patches and security fixes.

For other services, where you need the flexibility of running your own EC2 instances, keeping them up to date with the latest patches and fixes can be a challenge. AWS offers a number of options for this task, from providing the latest AMIs with patches applied while staying within a product family (for example, staying on Ubuntu 16.04 LTS as a short-term action before moving to Ubuntu 18.04 LTS), to using AWS Systems Manager to perform automated patching on a regular cadence so that your instances stay up to date.

One of the main recommendations we make to our customers is to apply patches to a test or staging environment before rolling them out to production, so that compatibility can be confirmed between the application updates, the operating system updates and your own code that may be running on the instance.

Once these changes have been validated, the same set of patches can be applied to your production environment. Applying exactly the same set is critical: patches released between your initial testing and your roll-out to production must not be included, as they may not have been tested with your application.

This approach works well and provides the security of knowing that your application and instances are up to date, but it also requires manual intervention from DevOps engineers to ensure compliance and compatibility.

This is where automated testing comes into play: it reduces the need for manual intervention while still providing the required testing.

At CirrusHQ we pride ourselves on delivering your workloads with these capabilities built in from the outset. Please contact us to find out more about our services.

Automated Compliance

When working on compliance with industry standards or internal compliance requirements, a number of tools and policies are normally set up to clean up standard instances and make sure that each image that is deployed conforms to a set of standards.

A good example of this is the AWS Best Practices for hardening AMIs before deployment.

Other AWS tools, such as Amazon Inspector, can run a number of tests against your instances and report findings for you to act on.

This document describes a way of including these best practices and the benefits of Amazon Inspector in an automated way using EC2 Image Builder.

EC2 Image Builder

AWS provides the EC2 Image Builder service for creating your own customised AMIs, with all of your own software installed, best-practice security and compliance applied, and tests run against your instances. All of this happens through an automated, repeatable pipeline that can be run on a schedule, so that your deployments have the latest patches and fixes, and those patches have been tested.

Overview

EC2 Image Builder is designed to create Golden AMIs for your application software. These are Amazon Machine Images that have been customised for your specific use case and have your set of applications and code installed. As an example, you could take one of Amazon’s patched images for Amazon Linux 2 with EBS volume support, install PHP, your code and any other packages, run security scripts against it to harden the image, and run some tests. At the end of the process you will have a Golden AMI that can be used anywhere you need to run your application.

Having an AMI that is pre-updated, hardened and ready to go makes launching new instances via Auto Scaling groups much easier and quicker.

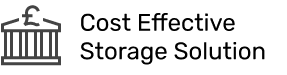

EC2 Image Builder is split into a number of stages: Build, Validate and Test. These are all performed in an Image Pipeline, which runs through them to create the AMI at the end.

Build Stage

The build stage is where you customise the AWS-provided image to meet your needs. There are a number of different actions you can take here.

The full build stage is described as a Recipe, which can be reused in other places. The individual actions within that Recipe are called Components, and are also reusable.
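As a minimal sketch of how a Recipe ties Components to a parent image, the CloudFormation fragment below defines a single recipe. The logical ID, recipe name and version are hypothetical. The parent image and the `update-linux` component are referenced using the AWS-managed ARN pattern, with `x.x.x` assumed to select the latest available version; check the exact ARNs available in your own region before relying on them.

```yaml
AWSTemplateFormatVersion: "2010-09-09"
Description: Sketch of an Image Builder recipe (names are hypothetical)

Resources:
  GoldenAmiRecipe:
    Type: AWS::ImageBuilder::ImageRecipe
    Properties:
      Name: php-golden-ami        # hypothetical recipe name
      Version: "1.0.0"
      # Assumed ARN pattern for the AWS-managed Amazon Linux 2 image
      ParentImage: !Sub arn:${AWS::Partition}:imagebuilder:${AWS::Region}:aws:image/amazon-linux-2-x86/x.x.x
      Components:
        # Components are applied in the order they are listed
        - ComponentArn: !Sub arn:${AWS::Partition}:imagebuilder:${AWS::Region}:aws:component/update-linux/x.x.x
```

Your own components would be added to the `Components` list by ARN in the same way.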

Each of these components can be set up separately and reused as needed. The example below is a step that updates a Linux instance while excluding a specific package.

    name: UpdateMyLinux
    action: UpdateOS
    onFailure: Abort
    maxAttempts: 3
    inputs:
      exclude:
        - ec2-hibinit-agent

Components can also be created and controlled through CloudFormation.
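One way this might look is sketched below: the update step shown earlier is wrapped in a complete component document and embedded in an `AWS::ImageBuilder::Component` resource. The logical ID, component name, version and document description are hypothetical values chosen for illustration.

```yaml
AWSTemplateFormatVersion: "2010-09-09"
Description: Sketch of an Image Builder component defined in CloudFormation

Resources:
  UpdateMyLinuxComponent:
    Type: AWS::ImageBuilder::Component
    Properties:
      Name: update-my-linux       # hypothetical component name
      Platform: Linux
      Version: "1.0.0"
      # The component document itself is passed inline as a YAML string
      Data: |
        name: UpdateMyLinuxDocument
        description: Updates the OS, skipping one package
        schemaVersion: 1.0
        phases:
          - name: build
            steps:
              - name: UpdateMyLinux
                action: UpdateOS
                onFailure: Abort
                maxAttempts: 3
                inputs:
                  exclude:
                    - ec2-hibinit-agent
```

Keeping the document inline means the component is versioned alongside the rest of your infrastructure templates.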

The build step is the main section of Image Builder. This is where your application is set up to work as you need it.

Validation Stage

Within the validation stage, you can run tasks that ensure your AMI meets the standards you require. One example is running Amazon Inspector against your AMI to ensure that all of your Inspector requirements are met.

If one of the requirements fails, then you can stop the Image Builder process at that point and manually notify your team to investigate the issue.
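As an illustration of the stop-on-failure behaviour, here is a minimal sketch of a component document with a `validate` phase. It does not use Amazon Inspector; the two shell checks, the step names and the document name are hypothetical stand-ins for whatever standards you need to enforce. Each step uses `onFailure: Abort`, so a non-zero exit code from any command stops the process.

```yaml
name: ValidatePhpImage
description: Hypothetical validation checks run before the AMI is created
schemaVersion: 1.0
phases:
  - name: validate
    steps:
      - name: CheckPhpInstalled
        action: ExecuteBash
        onFailure: Abort
        inputs:
          commands:
            # Fails the step if the php binary is missing
            - php --version
      - name: CheckRootLoginDisabled
        action: ExecuteBash
        onFailure: Abort
        inputs:
          commands:
            # grep exits non-zero when the hardening setting is absent
            - grep -Eq '^PermitRootLogin no' /etc/ssh/sshd_config
```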

Test Stage

The test stage is used to further ensure that your image is built the way you require. This can encompass unit testing, with as many or as few tests as you need. For example, you may want to make sure that when a new instance is spun up you can connect to the application on a specific endpoint, and you can write a test to check that this works correctly.

Then, as with the Validation stage, you can stop or proceed with the build as needed.
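The endpoint check described above could be sketched as a component document with a `test` phase. The document name, step name and the `http://localhost/health` URL are assumptions made for illustration; substitute whichever endpoint your application actually exposes.

```yaml
name: TestAppEndpoint
description: Hypothetical smoke test for the application endpoint
schemaVersion: 1.0
phases:
  - name: test
    steps:
      - name: CheckHealthEndpoint
        action: ExecuteBash
        onFailure: Abort
        maxAttempts: 3
        inputs:
          commands:
            # --fail makes curl exit non-zero on HTTP 4xx/5xx responses
            - curl --fail --silent --show-error --max-time 10 http://localhost/health
```

Setting `maxAttempts` gives the application a few chances to finish starting before the test is treated as a failure.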

Automation using CloudFormation and CodePipeline

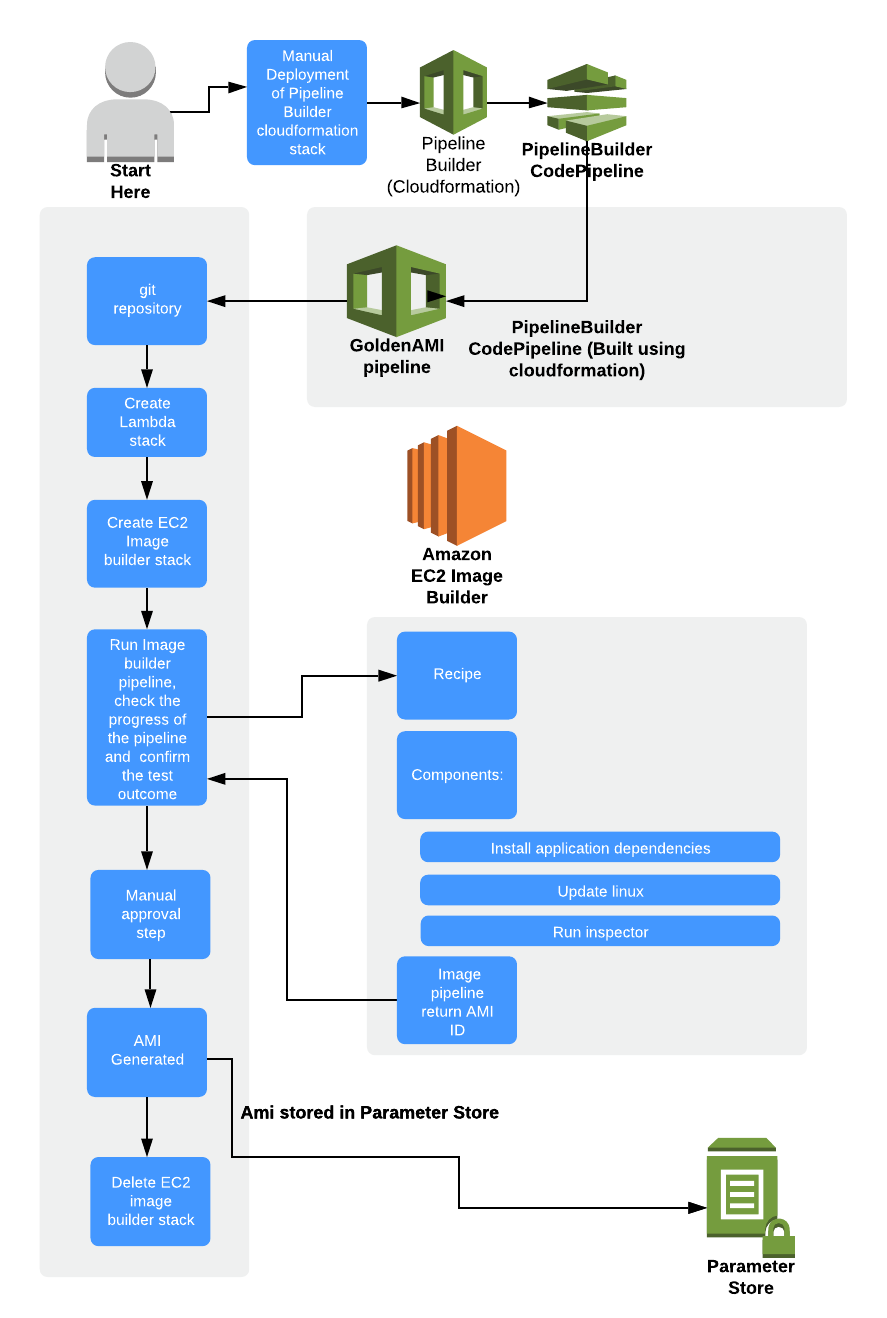

The Image Builder process provides a lot of automation for creating your images. However, you can go a few steps further and automate the full end-to-end deployment process, so that deploying new code creates a completely new Golden AMI with that code installed. You can then be sure that the latest configuration updates and operating system patches have been installed, and that your code has been validated and tested, before you deploy it.

Because EC2 Image Builder creates a pipeline that takes in an AMI, applies recipes and components, and outputs another AMI, the process fits well within AWS CodePipeline by using the CloudFormation support released for EC2 Image Builder.

In this example, we create a CodePipeline setup that connects to a GitHub repository, downloads the infrastructure CloudFormation templates and the application artifacts, creates the EC2 Image Builder pipeline, runs the Image Pipeline as described so far, and then writes the resulting AMI ID to AWS Systems Manager Parameter Store so that other AWS products (such as another CloudFormation deployment) can access it.
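Separately from the pipeline described in this article, the final hand-off to Parameter Store can be sketched in isolation. The fragment below is a simplified variation, not the setup shown in the diagram: it assumes the recipe and infrastructure configuration already exist and are passed in by ARN, and it uses an `AWS::ImageBuilder::Image` resource, which builds the image as part of the stack deployment. The logical IDs and the parameter name are hypothetical.

```yaml
AWSTemplateFormatVersion: "2010-09-09"
Description: Sketch that builds an image and publishes its AMI ID

Parameters:
  ImageRecipeArn:
    Type: String
  InfrastructureConfigurationArn:
    Type: String

Resources:
  GoldenImage:
    Type: AWS::ImageBuilder::Image
    Properties:
      ImageRecipeArn: !Ref ImageRecipeArn
      InfrastructureConfigurationArn: !Ref InfrastructureConfigurationArn

  GoldenAmiParameter:
    Type: AWS::SSM::Parameter
    Properties:
      Name: /golden-ami/php-app/latest   # hypothetical parameter name
      Type: String
      # ImageId is the ID of the AMI produced by the build
      Value: !GetAtt GoldenImage.ImageId
```

Another CloudFormation stack could then read the AMI ID by declaring a template parameter of type `AWS::SSM::Parameter::Value<AWS::EC2::Image::Id>` that points at the same parameter name.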

As you can see, there is a wide range of customisations that could be made at this point (in this example, we have also created a Lambda stack deployment for further control of the Image Pipeline stages and for cleanup). You have the full power of CloudFormation and CodePipeline to take the AMI that is created and use it in any number of processes. This gives you confidence that your deployments are using a pre-prepared, hardened and up-to-date image containing your application.

To further enhance the automation, the EC2 Image Builder pipeline can be configured to run on a regular schedule, bringing in the latest configurations and updates for each section as required. Even if you have not rolled out any new code recently, your instances are still kept up to date with the latest patches, and your tests have been run to ensure your instances are working as required.
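A scheduled pipeline might be declared along the following lines. This is a sketch under stated assumptions: the weekly cron expression, pipeline name, logical ID and test timeout are illustrative choices, and the recipe and infrastructure configuration are assumed to exist already and are passed in by ARN.

```yaml
AWSTemplateFormatVersion: "2010-09-09"
Description: Sketch of a scheduled Image Builder pipeline

Parameters:
  ImageRecipeArn:
    Type: String
  InfrastructureConfigurationArn:
    Type: String

Resources:
  WeeklyGoldenAmiPipeline:
    Type: AWS::ImageBuilder::ImagePipeline
    Properties:
      Name: golden-ami-weekly     # hypothetical pipeline name
      ImageRecipeArn: !Ref ImageRecipeArn
      InfrastructureConfigurationArn: !Ref InfrastructureConfigurationArn
      ImageTestsConfiguration:
        ImageTestsEnabled: true
        TimeoutMinutes: 60
      Schedule:
        # Assumed schedule: 02:00 UTC every Sunday
        ScheduleExpression: cron(0 2 ? * sun *)
        # Only build when the schedule matches and dependencies have updates
        PipelineExecutionStartCondition: EXPRESSION_MATCH_AND_DEPENDENCY_UPDATES_AVAILABLE
      Status: ENABLED
```

Using `EXPRESSION_MATCH_ONLY` instead would rebuild the image on every scheduled run, whether or not anything upstream has changed.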

Cost

EC2 Image Builder itself is free, but each of the resources it uses is chargeable. For example, you are responsible for the costs associated with any logs, the use of Amazon Inspector, the time the test instance is running in your account, and any other resources used during the creation of the AMI. However, as this process is automated, the costs are kept to a reasonable level and any unused resources are removed when required.

If you would like to know more, CirrusHQ would be delighted to have a conversation about your DevOps practices and how we can automate compliance. Please visit our contact page.